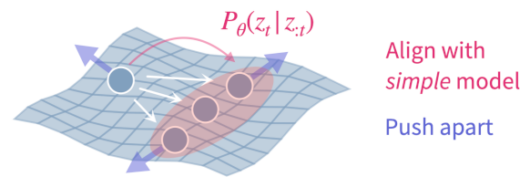

Fabian’s paper “Understanding Self-Supervised Learning via Latent Distribution Matching” was accepted as an ICML spotlight! https://arxiv.org/abs/2605.03517 We unify self-supervised learning (SSL) algorithms (e.g., contrastive, VICReg, stopgrad) via latent distribution matching (LDM), which matches an induced latent…

Cosyne 2026

The lab is at Cosyne 2026 with three posters! Go and check them out if you are in Lisbon! 2-069: Learning representations of moving objects in a reconstruction-free cortical circuit model, Friday 13th, 13:15-16:15 (Atena…

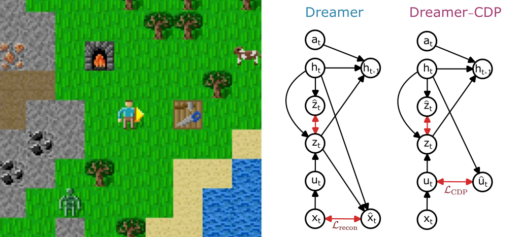

New paper: Dreamer-CDP — Reconstruction-Free World Models for Reinforcement Learning

In our new tiny paper, accepted at the ICLR workshop on world models, we introduce Dreamer-CDP, a Dreamer variant that learns a world model without reconstructing raw pixel observations. Preprint: https://arxiv.org/abs/2603.07083 Standard model-based reinforcement learning…

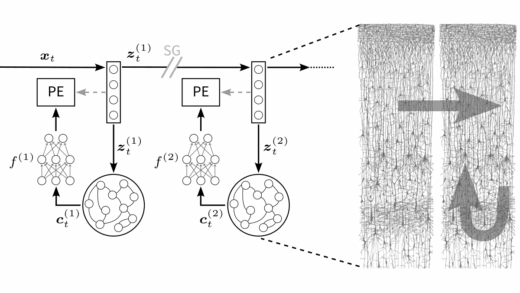

Understanding neural circuit principles for representation learning through joint-embedding predictive architectures

We’re happy to share our new preprint “Understanding neural circuit principles for representation learning through joint-embedding predictive architectures” led by Atena and Manu 🚀 We looked into the question of how the cortex learns to represent…

New mates on our crew

This year we gladly welcome four new Kraken Crew members: Fabian, Thomas, Michael, and Rory (jointly with the Friedrich lab). We look forward to what we will learn from your cool research projects and to…

SNUFA 2025

We are stoked to bring you another edition of SNUFA, our friendly online workshop on spiking neural networks as universal function approximators. This year it takes place on 5-6 November 2025, during European afternoons. Like every year, we…

Our work featured by The Transmitter

We are deeply honored to see our work featured in The Transmitter’s “This Paper Changed My Life.” Huge thanks to Dan Goodman for the kind words – and to our amazing community that keeps pushing…

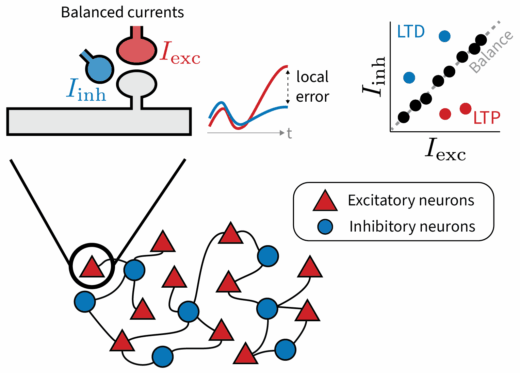

Breaking Balance: Encoding local error signals in perturbations of excitation-inhibition balance

Why does the brain maintain such precise excitatory-inhibitory balance? Our new preprint led by Julian Rossbroich explores a provocative idea: small, targeted deviations from this balance may serve a purpose, namely to encode local error signals…

Congratulations Tengjun

We are happy that Tengjun, another lab alumnus, graduated with flying colors at his home university in China. Tengjun was a visiting student from Zhejiang University in our group from 2023 to 2024. He worked…

Congratulations Manu

We are thrilled to celebrate the successful PhD defense and graduation of Dr Manu Srinath Halvagal, whose groundbreaking thesis bridges the fields of neuroscience and machine learning. His dissertation, titled “Predictive Self-Supervised Learning in Brains…