I am very much looking forward to presenting some recent work with Surya on learning in spiking neural networks at the CoSyNe workshop “Deep learning” and the brain (6:20–6:50 pm on Monday, 27 February 2017, in “Wasatch”).

Update: Preprint available.

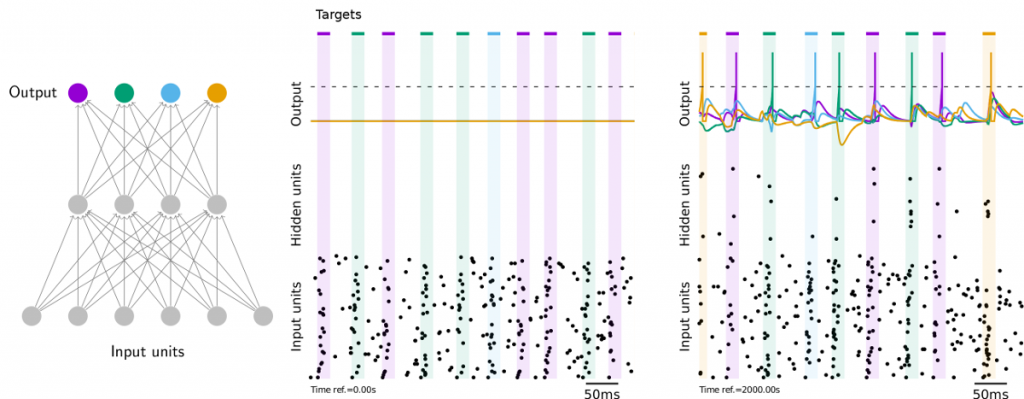

In my talk I will revisit the problem of training multi-layer spiking neural networks using an objective function approach. Due to the non-differentiable nature of spiking neurons and their non-trivial history-dependence induced by the spike reset, it is generally not possible to apply gradient-based learning methods like the ones used to train deep neural networks in machine learning.

During my presentation, I will address, one by one, the core problems typically encountered when training spiking neural networks, and I will introduce Superspike, a new approach to training deterministic spiking neural networks to solve complex, non-linearly separable temporal tasks.
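To make the non-differentiability problem concrete, here is a minimal NumPy sketch. It shows that the slope of a hard spike threshold is zero everywhere except exactly at the threshold, so a plain gradient carries no learning signal. The smooth stand-in below (the derivative of a fast sigmoid) and the names `theta` and `beta` are assumptions chosen for illustration; this post does not specify them, and the sketch is not meant as the actual Superspike implementation.

```python
import numpy as np

def spike(u, theta=1.0):
    """Hard threshold: 1.0 where the membrane potential u reaches theta."""
    return (u >= theta).astype(float)

def surrogate_grad(u, theta=1.0, beta=10.0):
    """Derivative of a fast sigmoid, used as a smooth stand-in.

    Assumption for illustration: both the functional form and the
    steepness parameter beta are hypothetical choices.
    """
    return 1.0 / (1.0 + beta * np.abs(u - theta)) ** 2

u = np.array([0.2, 0.9, 1.0, 1.5])  # example membrane potentials
eps = 1e-4

# Central finite difference of the hard threshold: zero away from theta,
# and a huge spike right at theta (it diverges as eps shrinks).
numeric = (spike(u + eps) - spike(u - eps)) / (2 * eps)

# The surrogate is finite everywhere and largest close to the threshold.
smooth = surrogate_grad(u)

print("finite-difference slope:", numeric)
print("surrogate slope:        ", smooth)
```

The design point is that the surrogate still peaks at the threshold, so neurons that were close to spiking receive the largest updates, while the forward pass keeps its hard, deterministic threshold.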

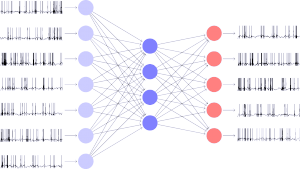

Importantly, Superspike has a direct interpretation as a Hebbian three-factor learning rule. Moreover, I am going to share some of my ideas on how similar algorithms could be implemented in neurobiology. For instance, when combined with feedback alignment (Lillicrap et al. 2016), the weight transport problem can be alleviated (see the figure below for a simple example). With all that said, it would be great if you joined me for my talk. I am looking forward to fruitful discussions during the workshop and to your feedback.
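For readers unfamiliar with feedback alignment, the following is a minimal sketch of the idea on a small rate-based (non-spiking) toy network. Instead of sending the output error back through the transpose of the forward weights, which is what creates the weight transport problem, it uses a fixed random matrix. All names, sizes, and the `tanh` nonlinearity are assumptions for illustration and are not taken from the talk.

```python
import numpy as np

rng = np.random.default_rng(0)
n_in, n_hid, n_out = 4, 8, 2  # hypothetical layer sizes

W1 = rng.normal(0.0, 0.5, (n_hid, n_in))   # input -> hidden weights
W2 = rng.normal(0.0, 0.5, (n_out, n_hid))  # hidden -> output weights
B = rng.normal(0.0, 0.5, (n_hid, n_out))   # fixed random feedback weights
B_before = B.copy()

def hidden_error(e_out, h, feedback):
    """Send the output error to the hidden layer through `feedback`."""
    return (feedback @ e_out) * (1.0 - h ** 2)  # (1 - h^2) is tanh'

x = rng.normal(size=n_in)
target = np.array([0.5, -0.5])

# Forward pass
h = np.tanh(W1 @ x)
y = W2 @ h
e = y - target

# Backpropagation needs the transpose of the forward weights ...
delta_bp = hidden_error(e, h, W2.T)
# ... whereas feedback alignment uses the fixed random matrix B.
delta_fa = hidden_error(e, h, B)

# One update step; only the forward weights change, B is never trained.
lr = 0.05
W2 -= lr * np.outer(e, h)
W1 -= lr * np.outer(delta_fa, x)
```

Because `B` is never updated and never has to mirror `W2`, the backward pathway does not need access to the forward synaptic weights.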

Dear Friedemann,

I like your sketch of an SNN above. Would it be fine with you if I reuse it? If so, would a “© F. Zenke” next to it suffice, or would you like a paper to be cited with it (e.g. the Superspike paper or another one)?

Kind regards

M.

Hi Manolis, thank you for asking. It depends a bit on what you want to use it for, but for general use on websites or in presentations, I am fine if you keep the copyright notice © F. Zenke and/or link to my website.

Dear Friedemann,

This workshop seems very interesting to me. Is the video available somewhere?

Best,

F.

Thanks for your interest. Unfortunately, there is no video of this workshop, but there is a more recent one here: https://zenkelab.org/2020/08/online-workshop-spiking-neural-networks-as-universal-function-approximators/