Update (22.01.2022): Now published as Cramer, B., Billaudelle, S., Kanya, S., Leibfried, A., Grübl, A., Karasenko, V., Pehle, C., Schreiber, K., Stradmann, Y., Weis, J., et al. (2022). Surrogate gradients for analog neuromorphic computing. PNAS 119.

Paper: https://www.pnas.org/content/119/4/e2109194119

Preprint: https://arxiv.org/abs/2006.07239

This work was led by Benjamin Cramer and Sebastian Billaudelle and is a joint effort with Johannes Schemmel and colleagues at the Kirchhoff-Institute for Physics at Heidelberg University.

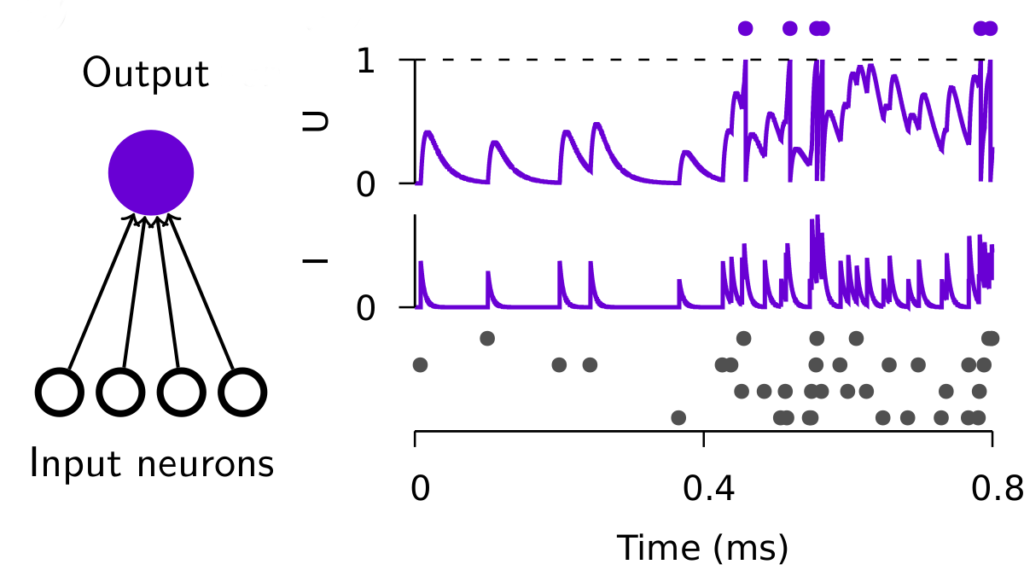

Spiking neurons are the basic units underlying information processing in the brain. They integrate their inputs in an analog manner but communicate their outputs through temporally sparse digital events, a.k.a. spikes.
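To make the analog/digital split concrete, here is a minimal sketch of a textbook leaky integrate-and-fire (LIF) neuron, integrated with the forward Euler method. It is an illustrative software model, not a description of the BrainScaleS-2 neuron circuits; the function name `simulate_lif` and all parameter values are assumptions chosen for readability.

```python
def simulate_lif(input_current, dt=1e-3, tau_mem=20e-3,
                 v_leak=0.0, v_th=1.0, v_reset=0.0):
    """Forward-Euler leaky integrate-and-fire neuron (illustrative parameters).

    Returns the membrane trace and the time-step indices of emitted spikes."""
    v = v_leak
    trace, spikes = [], []
    for t, i_syn in enumerate(input_current):
        # Analog part: the membrane leaks towards v_leak and integrates the input.
        v += dt / tau_mem * (-(v - v_leak) + i_syn)
        if v >= v_th:
            # Digital part: emit an all-or-none event and reset the membrane.
            spikes.append(t)
            v = v_reset
        trace.append(v)
    return trace, spikes


trace, spikes = simulate_lif([2.0] * 100)
print(spikes)  # → [13, 27, 41, 55, 69, 83, 97]
```

The membrane potential is a continuous quantity, while the output is only a short list of event times: seven spikes for one hundred time steps of constant drive.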

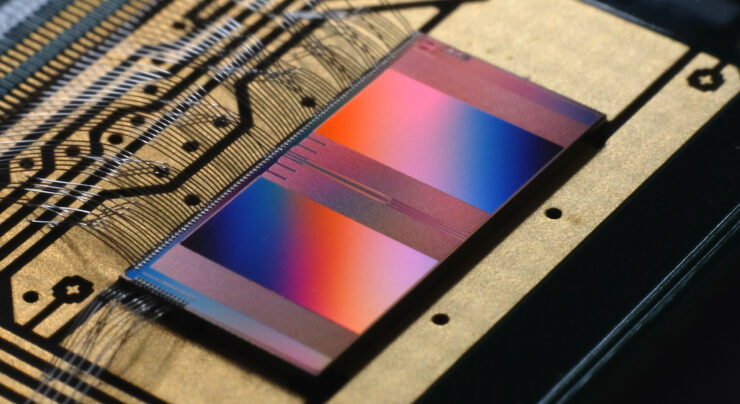

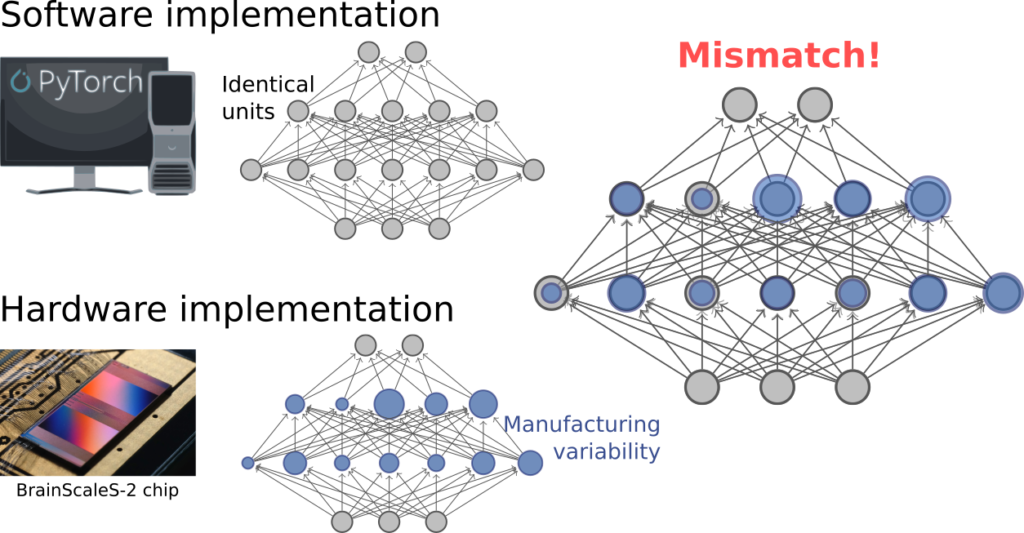

The mixed-signal character of neural information processing can be implemented efficiently in analog neuromorphic hardware. However, to match the capabilities of biological information processing, we also have to instantiate functional network connectivity, i.e., find synaptic weights that let the network solve a given task, which is a difficult algorithmic problem.

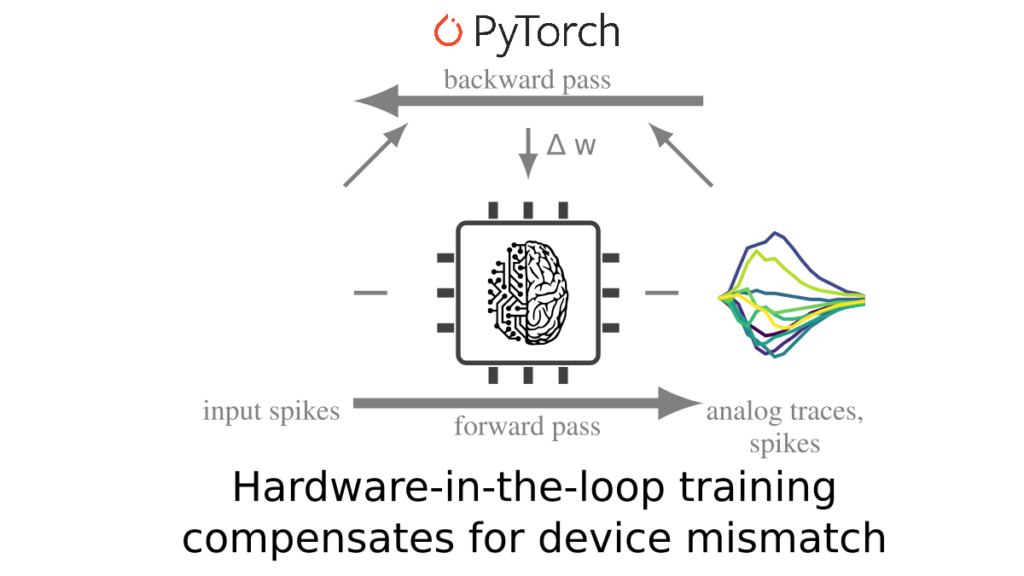

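In this work, we used the BrainScaleS-2 single-chip system as a physical substrate to emulate the forward pass of a spiking neural network. We then computed surrogate gradients in software from the recorded hardware activity and used them to update the hardware weights. Because these updates are based on measurements of the actual chip, training adapts to its device mismatch instead of having to model it, which allowed us to sidestep this issue.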
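The training loop described above can be sketched in a few lines of plain Python. This is a toy under stated assumptions, not the authors' implementation: `emulate_on_hardware` is a hypothetical stand-in for the chip, with a hidden per-synapse gain (`MISMATCH`) playing the role of device mismatch that the trainer never reads. The backward pass uses the recorded membrane trace together with a fast-sigmoid surrogate derivative (one common choice, assumed here), and the loss, which pushes the spike count towards a target, is an illustrative assumption rather than the classification loss of the paper.

```python
import math
import random

# Illustrative constants (assumptions, not BrainScaleS-2 settings).
N_IN, T = 5, 200               # input channels, time steps per trial
V_TH, BETA = 1.0, 5.0          # firing threshold, surrogate steepness
ALPHA = math.exp(-1.0 / 20.0)  # membrane decay per step (tau_mem = 20 steps)

rng = random.Random(0)
# Fixed input raster: INPUTS[t][i] == 1 if input i spikes at step t.
INPUTS = [[1 if rng.random() < 0.1 else 0 for _ in range(N_IN)] for _ in range(T)]
# Hidden per-synapse gains mimicking device mismatch; the trainer never reads them.
MISMATCH = [rng.uniform(0.7, 1.3) for _ in range(N_IN)]


def emulate_on_hardware(weights):
    """Hypothetical stand-in for the analog forward pass.

    Returns the recorded membrane trace and the output spike train."""
    v, trace, spikes = 0.0, [], []
    for x in INPUTS:
        v = ALPHA * v + sum(g * w * xi for g, w, xi in zip(MISMATCH, weights, x))
        trace.append(v)          # membrane value before any reset
        s = 1 if v >= V_TH else 0
        spikes.append(s)
        if s:
            v = 0.0              # reset after an output spike
    return trace, spikes


def surrogate_derivative(v):
    """Fast-sigmoid stand-in for the step function's derivative (zero almost everywhere)."""
    return 1.0 / (BETA * abs(v - V_TH) + 1.0) ** 2


def surrogate_gradient(trace, spikes, target):
    """dL/dw for L = (spike_count - target)**2.

    Uses the *recorded* trace but a nominal, mismatch-free model for
    dv[t]/dw_i; the reset is ignored in the backward pass."""
    dl_ds = 2.0 * (sum(spikes) - target)
    elig = [0.0] * N_IN          # nominal dv[t]/dw_i (low-pass filtered input)
    grad = [0.0] * N_IN
    for v, x in zip(trace, INPUTS):
        elig = [ALPHA * e + xi for e, xi in zip(elig, x)]
        sg = surrogate_derivative(v)
        for i in range(N_IN):
            grad[i] += dl_ds * sg * elig[i]
    return grad


def train(target=5, epochs=300, lr=1e-5):
    weights = [0.02] * N_IN
    counts = []
    for _ in range(epochs):
        trace, spikes = emulate_on_hardware(weights)           # forward pass "on chip"
        counts.append(sum(spikes))
        grad = surrogate_gradient(trace, spikes, target)       # backward pass in software
        weights = [w - lr * g for w, g in zip(weights, grad)]  # write weights back
    counts.append(sum(emulate_on_hardware(weights)[1]))
    return weights, counts


weights, counts = train()
print(counts[0], counts[-1])
```

The surrogate derivative is what makes learning start at all: with the initial weights the neuron is silent, so the true gradient of the spike count is zero, but the smooth surrogate still points towards stronger weights. Since the trace comes from the (mismatched) emulation, the updates act on the behavior of the actual substrate.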

This hardware-in-the-loop approach allowed us to train spiking neural networks that process temporally encoded inputs using only a small number of spikes. In inference mode, our networks can process on the order of 80k inputs per second within a power budget of less than 200 mW.
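One simple way to encode inputs in time with few spikes is latency (time-to-first-spike) coding: each input channel fires at most once, and stronger inputs fire earlier. The sketch below assumes a linear mapping from intensities in [0, 1] to spike times in arbitrary units; the function name `latency_encode` and its parameters are hypothetical, and this is not necessarily the exact encoding used in the paper.

```python
def latency_encode(intensities, t_max=1.0, cutoff=0.0):
    """Map intensities in [0, 1] to at most one spike per channel.

    Brighter inputs spike earlier; channels at or below `cutoff` stay silent.
    Returns (spike_time, channel) pairs sorted by time."""
    events = []
    for channel, x in enumerate(intensities):
        if not 0.0 <= x <= 1.0:
            raise ValueError(f"intensity {x} of channel {channel} is outside [0, 1]")
        if x > cutoff:
            events.append((t_max * (1.0 - x), channel))
    events.sort()
    return events


print(latency_encode([0.0, 1.0, 0.5, 0.25]))
# → [(0.0, 1), (0.5, 2), (0.75, 3)]
```

Since every channel contributes at most one event, the number of input spikes per sample is bounded by the number of channels. As a rough consistency check on the figures above, a throughput of about 80k inputs per second at under 200 mW corresponds to at most about 0.2 W / 80,000 s⁻¹ = 2.5 µJ per processed input.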
